Latest updates:

- 2022-11-11: The paper notifications were sent out on November 11, 2022. If you have not received the email, please check your spam folder. Please take the reviewers' comments into account when revising your manuscript. Thank you!

- 2022-11-09: Our paper, "Citywide reconstruction of traffic flow using the vehicle-mounted moving camera in the CARLA driving simulator," was recently published at the 25th IEEE International Conference on Intelligent Transportation Systems (IEEE ITSC 2022).

- 2022-10-23: The paper submission portal is now open. Please submit your paper through the IEEE BigData Cup Paper Submission Portal.

- 2022-10-14: We have announced the winners of the VOD2022 challenge following the source code verification. Please check the leaderboard for more information.

- 2022-10-11: We have closed the Google Form for the source code submission. Thank you very much for participating! The top five teams are invited to submit papers. Papers must follow the IEEE conference template format described here: Manuscript Templates for Conference Proceedings. The deadline for paper submission is October 30. On 2022-10-14, we will update the website with the final winners.

- 2022-10-02: Submissions for the competition have now closed. Thank you very much for participating. Please submit your source code and relevant files through this Google Form.

- 2022-09-08: The submission portal for test-2 is now open. The competition will end on September 30, 2022.

- 2022-09-02: Test dataset (test-2) containing 1,500 images is released. The submission portal for test-2 will open on September 8, 2022. Submissions for both test-1 and test-2 will run in parallel until the end of the competition.

- 2022-09-01: We have clarified Rule 2 of the competition. Please take care not to use any real-world images that carry both vehicle class and orientation annotations. Pre-trained weights from ImageNet, COCO, etc., are allowed.

- 2022-09-01: We have added the reference to our recently published paper in the Special Issue "Street-level Imagery Analytics and Applications" of the ISPRS Journal of Photogrammetry and Remote Sensing.

- 2022-08-19: In case you are unable to participate in the challenge due to the lack of GPU computing/storage resources, please contact us: ashutosh@iis.u-tokyo.ac.jp

- 2022-07-06: Training dataset (train-2) containing more than 33,000 vehicle annotations in 12,940 images is released.

- 2022-06-10: Training dataset (train-1) is released along with the test dataset (test-1).

- 2022-06-10: The website of the BigData Cup challenge opens, and the task is revealed.

Introduction:

Vehicle class and orientation detection in the real-world using synthetic images from driving simulators

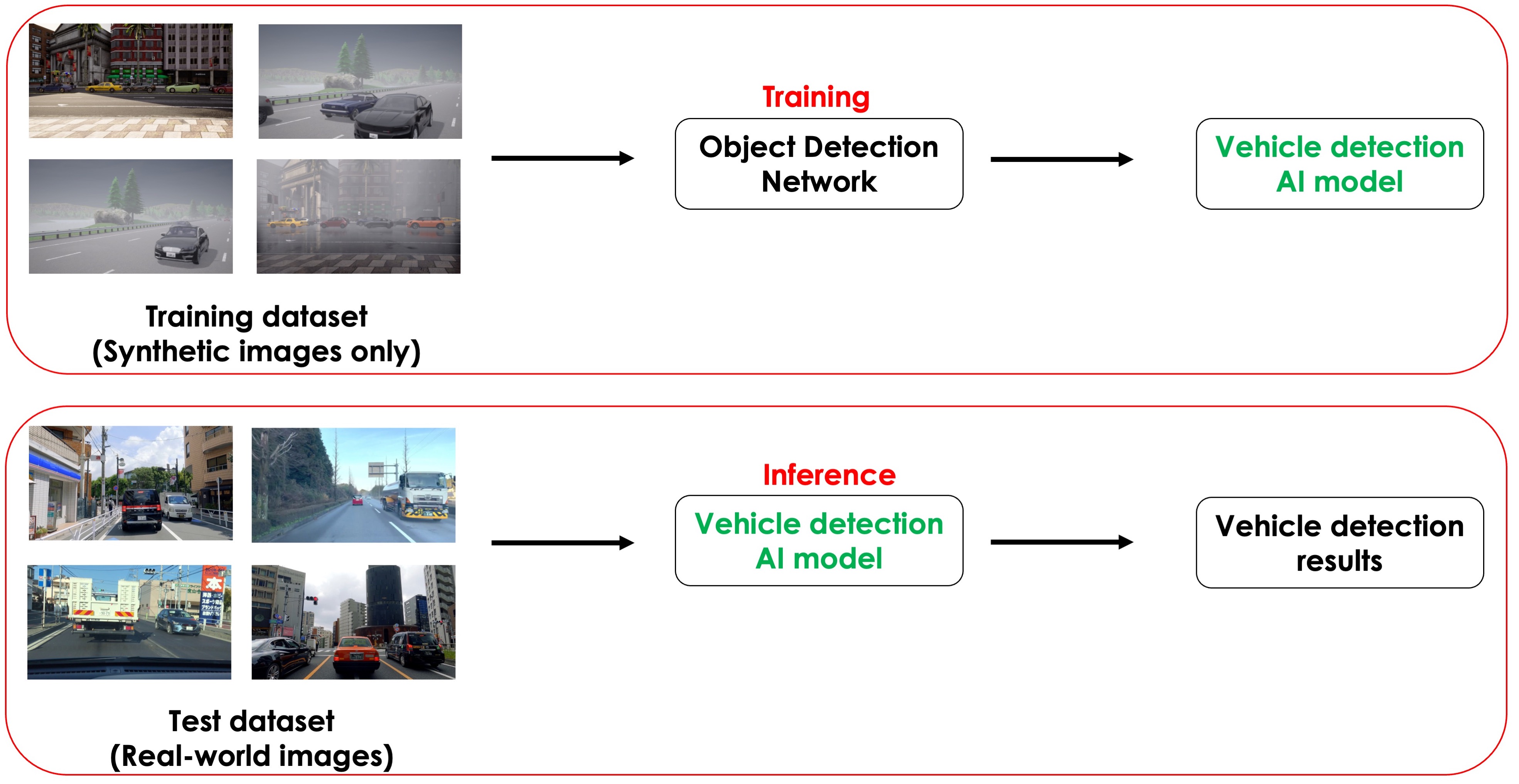

Training Convolutional Neural Networks (CNNs) or Vision Transformers for object detection requires large numbers of annotated objects across many images [1, 2]. Collecting and annotating real-world datasets for object detection is time-consuming and expensive. Urban driving simulators such as Car Learning to Act (CARLA) [3] and AirSim [4], as well as video games such as Grand Theft Auto V (GTA V), provide highly realistic virtual environments in which objects such as vehicles can be annotated automatically. Simulators can also vary conditions such as lighting, weather, and surroundings dynamically, producing a rich variety of images that helps make models robust. The proposed BigData Cup 2022 Challenge aims to explore techniques that improve the real-world performance of object detection models trained on synthetic datasets. The main objectives of the BigData Cup 2022 Challenge are as follows:

- To modify or develop object detection neural networks that improve real-world vehicle detection when trained on synthetic datasets prepared in a simulator.

- To examine how pixel-based image augmentation techniques that make synthetic images more photo-realistic affect detection results in the real world.

- To study the effect of synthetic images on improving object class detection with fewer annotations.
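The second objective concerns pixel-based augmentation. As a minimal sketch, assuming images are loaded as H×W×3 `uint8` NumPy arrays, the hypothetical helper `photometric_augment` below applies random contrast, brightness, and Gaussian noise. These are generic photometric transforms chosen for illustration, not a method prescribed by the challenge, and the parameter names and default ranges are assumptions.

```python
import numpy as np


def photometric_augment(
    image: np.ndarray,
    rng: np.random.Generator,
    max_brightness: float = 30.0,
    contrast_range: tuple = (0.8, 1.2),
    noise_std: float = 5.0,
) -> np.ndarray:
    """Randomly jitter contrast and brightness and add Gaussian noise.

    `image` is an H x W x 3 uint8 array. Only pixel values change; the image
    geometry is untouched, so bounding-box annotations stay valid as they are.
    Parameter names and defaults are illustrative assumptions.
    """
    img = image.astype(np.float32)
    # Contrast: scale each pixel's deviation from the image's mean intensity.
    alpha = rng.uniform(contrast_range[0], contrast_range[1])
    mean = img.mean()
    img = (img - mean) * alpha + mean
    # Brightness: shift every pixel by the same random offset.
    img += rng.uniform(-max_brightness, max_brightness)
    # Sensor-like noise: independent Gaussian noise per pixel and channel.
    img += rng.normal(0.0, noise_std, size=img.shape)
    # Clip back to the valid 8-bit range and restore the original dtype.
    return np.clip(img, 0, 255).astype(np.uint8)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Stand-in for a synthetic frame; real use would load a simulator image instead.
    frame = np.full((4, 6, 3), 128, dtype=np.uint8)
    augmented = photometric_augment(frame, rng)
    print(augmented.shape, augmented.dtype)  # (4, 6, 3) uint8
```

Because these transforms change only pixel values and never move pixels, the bounding boxes and the vehicle class and orientation labels of the synthetic annotations remain valid without any adjustment, which is the main practical appeal of pixel-level augmentation for detection.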

In the proposed BigData Cup challenge, models trained on the synthetic dataset will be evaluated on real-world vehicle detection. As part of this challenge, we will release a large-scale annotated dataset prepared in the CARLA driving simulator and test the trained models' accuracy against the real-world dataset [5]. While this challenge focuses on improving vehicle detection for autonomous driving and Intelligent Transportation Systems (ITS) applications, the developed techniques may be applied to object detection with computer vision algorithms in many other domains.

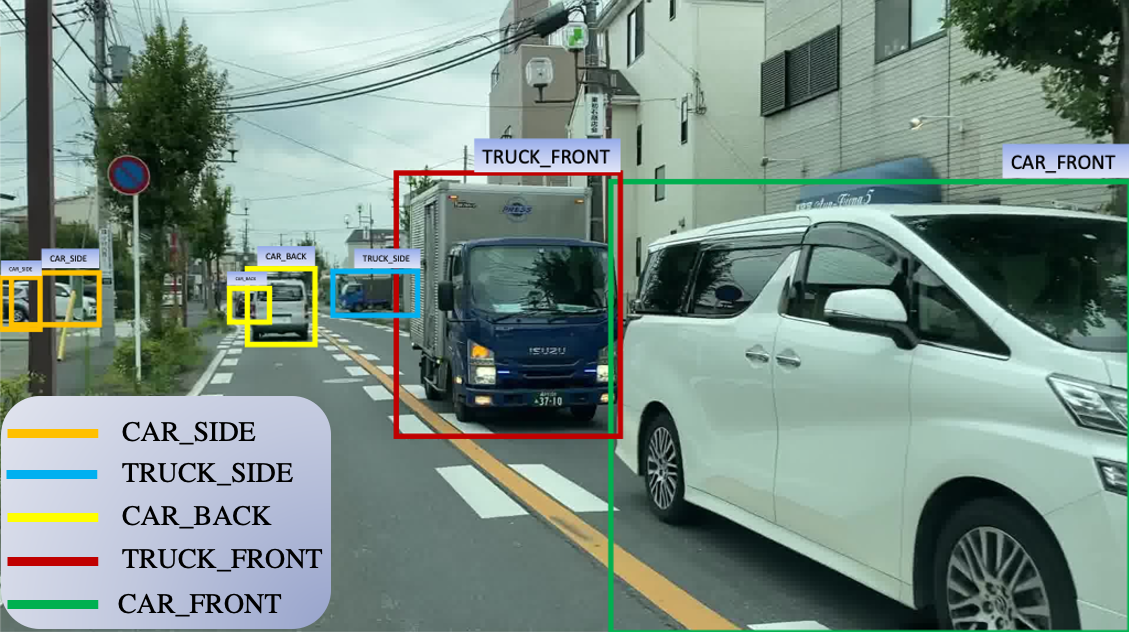

The Vehicle Orientation Dataset: Real and Synthetic

In the proposed BigData Cup challenge, two datasets will be used: the Vehicle Orientation (VO) dataset [5, 6] and a synthetic Vehicle Orientation (Synthetic VO) dataset [7]. The VO dataset contains over 210,000 real-world images with more than one million annotations, each providing both the vehicle class and the orientation. The VO dataset was published with our research work "Citywide reconstruction of cross-sectional traffic flow from moving camera videos" [5] and is publicly available at <https://github.com/sekilab/VehicleOrientationDataset>. As part of this challenge, we have released the Synthetic VO dataset [7], prepared under diverse environments in the CARLA driving simulator. The Synthetic VO dataset is similar to the original VO dataset, except that it is prepared in a simulated environment.

BigData Cup Challenge:

The challenge of this competition is to assess how well models trained solely on photo-realistic images from driving simulators transfer to real-world scenarios, and to improve their vehicle detection performance there. For this challenge, participants are required to train a model using synthetic images, such as those in the Synthetic VO dataset [7]; its performance will be evaluated on the real-world VO dataset [5]. To improve detection results, participants are encouraged to use additional training images, provided they are prepared only in a simulated environment. There are no restrictions on the simulator used; CARLA [3], AirSim [4], GTA, and others are all allowed.
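Extra simulator images are most useful when they cover varied conditions. A minimal sketch of weather randomization, assuming CARLA is the simulator: the pure-Python helpers below (hypothetical names `sample_weather` and `weather_schedule`) draw a reproducible list of weather settings whose keys match keyword arguments of `carla.WeatherParameters` in recent CARLA 0.9.x releases. The value ranges are illustrative assumptions, not the settings used to build the Synthetic VO dataset.

```python
import random

# Illustrative value ranges (assumptions). The keys match keyword arguments
# accepted by carla.WeatherParameters in recent CARLA 0.9.x releases.
WEATHER_RANGES = {
    "cloudiness": (0.0, 100.0),
    "precipitation": (0.0, 100.0),
    "precipitation_deposits": (0.0, 100.0),
    "wind_intensity": (0.0, 100.0),
    "fog_density": (0.0, 50.0),
    "wetness": (0.0, 100.0),
    "sun_azimuth_angle": (0.0, 360.0),
    # Negative sun altitude puts the sun below the horizon (dusk/night scenes).
    "sun_altitude_angle": (-20.0, 90.0),
}


def sample_weather(rng: random.Random) -> dict:
    """Draw one weather configuration, uniformly within each parameter's range."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in WEATHER_RANGES.items()}


def weather_schedule(n_episodes: int, seed: int = 0) -> list:
    """Return a reproducible list of weather configurations, one per capture episode."""
    rng = random.Random(seed)
    return [sample_weather(rng) for _ in range(n_episodes)]


if __name__ == "__main__":
    for i, params in enumerate(weather_schedule(3, seed=42)):
        print(i, {name: round(value, 1) for name, value in params.items()})
```

With a running CARLA server, each dictionary could be applied via `world.set_weather(carla.WeatherParameters(**params))` before capturing a batch of frames; that step needs the `carla` package and is not exercised here. Seeding the generator keeps the schedule reproducible, so a given set of synthetic images can be regenerated exactly.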

Citations:

Please cite the following when you use these resources (dataset, content, etc.).

- Kumar, A., Kashiyama, T., Maeda, H., Omata, H., & Sekimoto, Y. (2022, October). Citywide reconstruction of traffic flow using the vehicle-mounted moving camera in the CARLA driving simulator. In 2022 IEEE 25th International Conference on Intelligent Transportation Systems (ITSC) (pp. 2292-2299). IEEE.

- Kumar, A., Kashiyama, T., Maeda, H., Omata, H., & Sekimoto, Y. (2022). Real-time citywide reconstruction of traffic flow from moving cameras on lightweight edge devices. ISPRS Journal of Photogrammetry and Remote Sensing, 192, 115-129.

- Kumar, A., Kashiyama, T., Maeda, H., & Sekimoto, Y. (2021, December). Citywide reconstruction of cross-sectional traffic flow from moving camera videos. In 2021 IEEE International Conference on Big Data (Big Data) (pp. 1670-1678). IEEE.

Important dates:

- June 10, 2022: The website of the challenge opens, and the task is revealed.

- June 10, 2022: Training dataset (train-1) along with the test dataset (test-1) is released.

- June 25, 2022: The submission portal opens. Participants can now register their team and submit their solutions for evaluation.

- July 6, 2022: Additional training dataset (train-2) is released.

- September 1, 2022: Second test dataset (test-2) is released.

- September 30, 2022: BigData Cup challenge ends. Participants should submit their source code and solutions.

- October 10, 2022: Announcement of the winning teams and invitation to submit papers to the special track at the IEEE BigData 2022 conference.

- October 30, 2022: Deadline for the submission of the papers.

- November 10, 2022: Notification of paper acceptance.

- November 20, 2022: Camera-ready version of accepted papers. (Firm deadline)

- December 17 – December 20, 2022: IEEE BigData 2022 Conference, Osaka, Japan.

References:

- LeCun, Y., Bengio, Y., & Hinton, G. (2015). Deep learning. Nature, 521(7553), 436-444.

- Dosovitskiy, A., Beyer, L., Kolesnikov, A., Weissenborn, D., Zhai, X., Unterthiner, T., ... & Houlsby, N. (2020). An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929.

- Dosovitskiy, A., Ros, G., Codevilla, F., Lopez, A., & Koltun, V. (2017, October). CARLA: An open urban driving simulator. In Conference on Robot Learning (pp. 1-16). PMLR.

- Shah, S., Dey, D., Lovett, C., & Kapoor, A. (2018). AirSim: High-fidelity visual and physical simulation for autonomous vehicles. In Field and Service Robotics (pp. 621-635). Springer, Cham.

- Kumar, A., Kashiyama, T., Maeda, H., & Sekimoto, Y. (2021, December). Citywide reconstruction of cross-sectional traffic flow from moving camera videos. In 2021 IEEE International Conference on Big Data (Big Data) (pp. 1670-1678). IEEE.

- Kumar, A., Kashiyama, T., Maeda, H., Omata, H., & Sekimoto, Y. (2022). Real-time citywide reconstruction of traffic flow from moving cameras on lightweight edge devices. ISPRS Journal of Photogrammetry and Remote Sensing, 192, 115-129.

- Kumar, A., Kashiyama, T., Maeda, H., Omata, H., & Sekimoto, Y. (2022, October). Citywide reconstruction of traffic flow using the vehicle-mounted moving camera in the CARLA driving simulator. In 2022 IEEE 25th International Conference on Intelligent Transportation Systems (ITSC) (pp. 2292-2299). IEEE.